The above response may have been hallucinated and should have been independently verified.Īnd this one goes how it was expected. The NVIDIA GeForce RTX 4090 has 4,608 CUDA cores. Regardless, let's give it another chance to show its capabilities: The LLMs’ fakes are still a global issue for AI researchers. Just like it was said in an OpenAI report “ Lessons learned on language model safety and misuse”: “There is no silver bullet for responsible deployment.”. The NeMo Guardrails failed as well to prevent misinformation this time. Yes, the James Webb Space Telescope (JWST) took the very first pictures of exoplanets outside our own solar system.Īccording to NASA, the European Southern Observatory's Very Large Telescope ( VLT) took the first pictures of exoplanets in 2004. These distant worlds are called "exoplanets." Is this a True? JWST took the very first pictures of a planet outside our own solar system. When it’s done, let’s test it on a statement that Google Bard LLM failed: Considering Google's experience of losing a fortune on fabricated LM facts, the challenge is to set up a chatbot to double-check the generated response. The idea behind it is to design a couple of prompts to post-processing the output that, as it said, must filter LLM's fake data. NeMo Guardrails claims to solve the problem of LLMs fabricating and hallucinating the facts. Here are some simple examples of using the NeMo Guardrails: The developer configures dialog flows, user and bot utterances, and limitations using Colang, a human-readable modeling language.

Incorporating NeMo Guardrails into the NeMo framework allows developers to train and fine-tune language models using their domain expertise and datasets. Security – prevent an LLM from executing malicious code or calls to an external application in a way that poses security risks.

Safety – ensures that interactions with an LLM do not result in misinformation, toxic responses, or inappropriate content

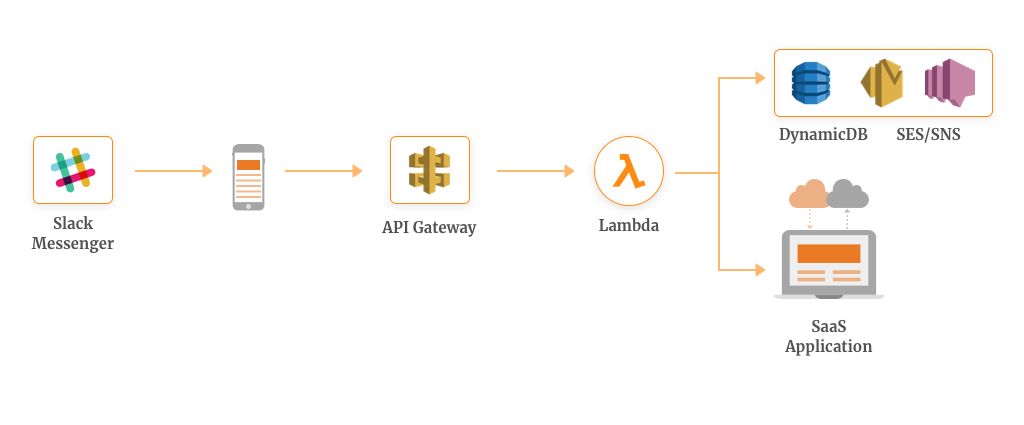

Topical – ensures that conversations stay focused on a particular topic and prevent them from veering off into undesired areas The toolkit supports three categories of guardrails: NeMo Guardrails are programmable constraints that monitor and dictate LLM user interactions, keeping conversations on track and within desired parameters.Īlso, it's built on Colang, a modeling language developed by NVIDIA for conversational AI, offering a human-readable and extensible interface for users to define dialog flows.ĭevelopers can use a Python library to describe user interactions and integrate these guardrails into applications.
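The "double-check" idea behind the hallucination warning can be illustrated without the toolkit itself. This is not NeMo Guardrails code, and the names `guarded_answer`, `build_check_prompt`, and `make_fake_llm` are hypothetical. The sketch assumes one particular post-processing strategy, a self-consistency check. The bot answers the question, the same question is resampled a few times, and a second prompt asks the model whether the samples agree with the first answer. If they do not, the warning is appended. The LLM is an assumed plain `prompt -> text` function. The demo stubs it out with canned replies and with an exact-match comparison that stands in for the model's yes/no judgment.

```python
from typing import Callable, List

# Hypothetical interface: any function that maps a prompt to a completion.
LLM = Callable[[str], str]

# Warning text taken from the guarded bot's output quoted in this post.
WARNING = ("The above response may have been hallucinated "
           "and should have been independently verified.")

CHECK_HEADER = "You are given a statement and alternative answers to the same question."


def build_check_prompt(statement: str, samples: List[str]) -> str:
    """Second-pass prompt: ask the model whether extra samples agree with the answer."""
    bullets = "\n".join(f"- {s}" for s in samples)
    return (
        f"{CHECK_HEADER}\n"
        f"Statement: {statement}\n"
        f"Alternative answers:\n{bullets}\n"
        "Do all alternative answers agree with the statement? Answer yes or no."
    )


def guarded_answer(question: str, llm: LLM, n_samples: int = 2) -> str:
    """Answer the question, then append a warning if resampled answers disagree."""
    answer = llm(question)
    samples = [llm(question) for _ in range(n_samples)]
    verdict = llm(build_check_prompt(answer, samples))
    if verdict.strip().lower().startswith("yes"):
        return answer
    return f"{answer}\n{WARNING}"


def make_fake_llm(replies: List[str]) -> LLM:
    """Stub LLM: canned replies for questions, exact-match comparison for check prompts."""
    pending = iter(replies)

    def fake_llm(prompt: str) -> str:
        if prompt.startswith(CHECK_HEADER):
            lines = prompt.splitlines()
            statement = lines[1][len("Statement: "):]
            alternatives = [line[2:] for line in lines if line.startswith("- ")]
            # Stand-in for the model's judgment: "yes" only if every sample matches.
            return "yes" if all(a == statement for a in alternatives) else "no"
        return next(pending)

    return fake_llm


if __name__ == "__main__":
    question = "How many CUDA cores does the NVIDIA GeForce RTX 4090 have?"
    # Hypothetical canned replies; the first matches the answer quoted in this post.
    llm = make_fake_llm([
        "The NVIDIA GeForce RTX 4090 has 4,608 CUDA cores.",
        "The NVIDIA GeForce RTX 4090 has 16,384 CUDA cores.",
        "The NVIDIA GeForce RTX 4090 has 4,608 CUDA cores.",
    ])
    print(guarded_answer(question, llm))
```

Because one resampled answer disagrees, the demo prints the 4,608-core answer followed by the warning line. The design choice is that the checker never needs ground truth, only agreement between samples. That is also why this kind of check can miss a falsehood the model repeats consistently, as in the JWST example.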
